What Is AWS EKS (Elastic Kubernetes Service)?

AWS EKS (Elastic Kubernetes Service) is designed to simplify the process of deploying, managing, and scaling Kubernetes clusters on AWS. It eliminates the need for customers to install, operate, and maintain their own Kubernetes control plane infrastructure. Instead, AWS handles these tasks, providing a fully managed, highly available, and secure Kubernetes control plane.

With EKS, customers can launch and manage Kubernetes clusters across multiple availability zones to achieve high availability and fault tolerance. EKS integrates with other AWS services, such as Elastic Load Balancing (ELB), Elastic Block Store (EBS), and Amazon Virtual Private Cloud (VPC), to provide a complete platform for running containerized applications on AWS.

EKS also supports the Kubernetes ecosystem, including tools like kubectl, Helm, and Operator Framework, enabling customers to easily manage their Kubernetes applications using their preferred tools and workflows.

This is part of an extensive series of guides about cloud security.

In this article:

- How Does Amazon EKS Work?

- AWS EKS: Architecture and Components

- AWS EKS Pricing

- AWS EKS Best Practices

How Does Amazon EKS Work?

Amazon EKS abstracts the complexity of managing the Kubernetes control plane to enable customers to focus on building and deploying their applications. Here is a general process for creating and managing Kubernetes clusters with EKS:

- Create a Kubernetes cluster: Customers can create an EKS cluster using the AWS Management Console, AWS CLI, or API. The EKS control plane is automatically deployed across multiple availability zones to provide high availability and fault tolerance.

- Launch nodes: Customers can launch worker nodes on AWS using EC2 instances. The worker nodes are connected to the EKS control plane and can run containerized applications using Kubernetes.

- Deploy applications: Customers can deploy containerized applications on the EKS cluster using Kubernetes.

- Scale and manage: Customers can use Kubernetes to scale and manage their containerized applications running on the EKS cluster.

- Monitor and troubleshoot: Customers can use AWS tools such as Amazon CloudWatch to monitor and troubleshoot their EKS clusters and applications. EKS also works with Kubernetes-native monitoring tools like Prometheus and Grafana.
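The steps above can be sketched as a sequence of CLI commands. The following is a minimal illustration, not an official workflow: it only builds the command lines and does not run them. The cluster name, region, role ARNs, subnet IDs, node group sizes, and manifest file name are all placeholders chosen for this example.

```python
import shlex


def eks_workflow_commands(cluster, region, cluster_role_arn, node_role_arn,
                          subnet_ids, manifest="app.yaml"):
    """Return one command (as an argument list) per step; nothing is executed."""
    subnets_csv = ",".join(subnet_ids)
    return [
        # 1. Create the cluster; AWS provisions the managed control plane.
        ["aws", "eks", "create-cluster", "--region", region,
         "--name", cluster, "--role-arn", cluster_role_arn,
         "--resources-vpc-config", f"subnetIds={subnets_csv}"],
        # 2. Launch worker nodes as a managed node group (sizes are placeholders).
        ["aws", "eks", "create-nodegroup", "--region", region,
         "--cluster-name", cluster, "--nodegroup-name", f"{cluster}-nodes",
         "--node-role", node_role_arn, "--subnets", *subnet_ids,
         "--scaling-config", "minSize=1,maxSize=3,desiredSize=2"],
        # 3. Point kubectl at the new cluster.
        ["aws", "eks", "update-kubeconfig", "--region", region, "--name", cluster],
        # 4. Deploy an application from a (hypothetical) manifest file.
        ["kubectl", "apply", "-f", manifest],
        # 5. Inspect what is running.
        ["kubectl", "get", "pods", "--all-namespaces"],
    ]


if __name__ == "__main__":
    commands = eks_workflow_commands(
        cluster="demo",                                   # placeholder name
        region="us-east-1",
        cluster_role_arn="arn:aws:iam::111122223333:role/eks-cluster",  # placeholder
        node_role_arn="arn:aws:iam::111122223333:role/eks-node",        # placeholder
        subnet_ids=["subnet-aaaa", "subnet-bbbb"],        # placeholder IDs
    )
    for argv in commands:
        print(shlex.join(argv))
```

Keeping the commands as data makes it easy to review them before running anything against a real account.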

EKS vs. ECS: What is the difference?

ECS stands for Elastic Container Service, which is a fully managed container orchestration service provided by AWS. ECS allows customers to easily run, manage, and scale Docker containers on a cluster of EC2 instances, without having to manage the underlying infrastructure. While both EKS and ECS can be used to deploy and manage containers, they have some differences in their approach and capabilities, including:

- Architecture: EKS uses Kubernetes as its orchestration engine, while ECS has its own proprietary orchestration system. Kubernetes is an open-source container orchestration system that has a large and active community contributing to its development.

- Flexibility: EKS is more flexible and customizable than ECS, as Kubernetes offers a wide range of configuration options and a large ecosystem of plugins and extensions. ECS, on the other hand, is more tightly integrated with other AWS services and offers a more streamlined and simplified experience for those who are already using other AWS services.

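- Scalability: Both EKS and ECS can scale up or down based on demand, and clusters in both services can span multiple availability zones within a region. Because EKS runs standard Kubernetes, the same workloads and tooling can be more easily replicated across regions or other environments.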

- Cost: ECS is generally less expensive than EKS, as it doesn’t require as much infrastructure and maintenance overhead. However, this may depend on the specific use case and workload.

- Ease of use: ECS is generally easier to use and set up than EKS, as it has a simpler interface and requires less knowledge of Kubernetes and container orchestration.

Learn more in our detailed guide to ECS vs EKS

AWS EKS: Architecture and Components

Architecture

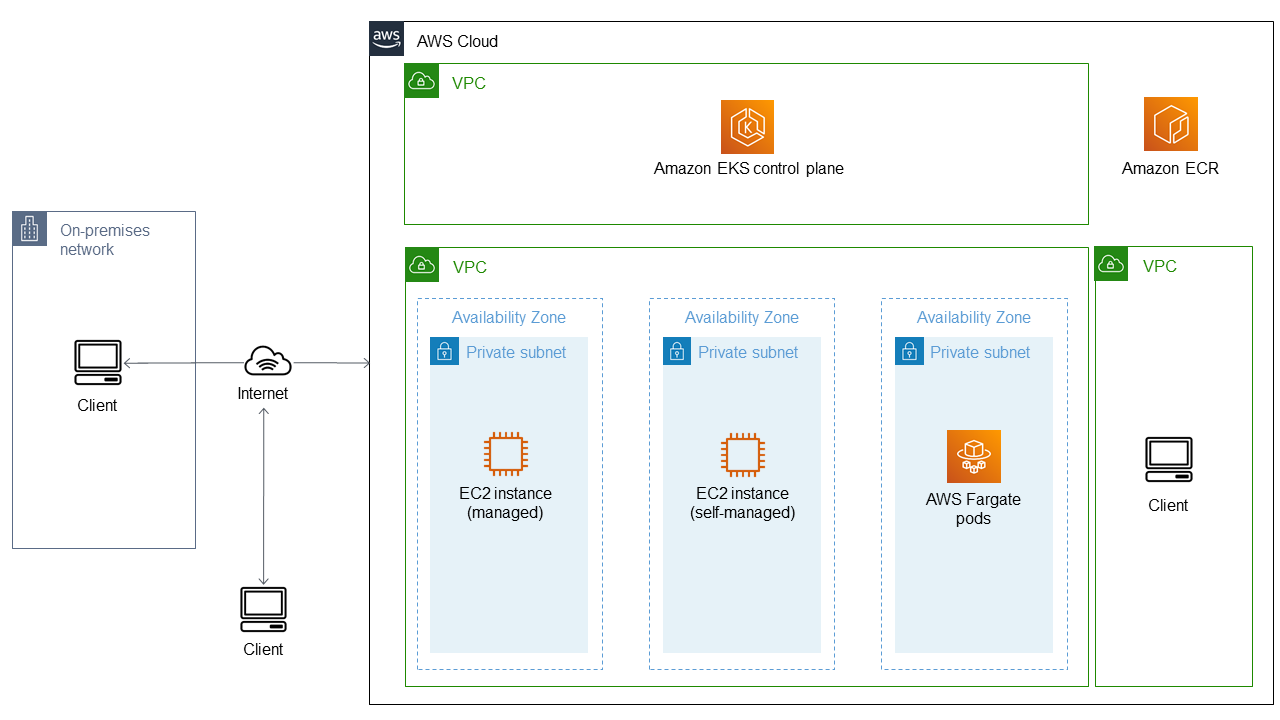

In AWS EKS, the architecture consists of two main components – the control plane and the data plane – that work together to provide a highly available, scalable, and secure platform. The control plane manages the overall state of the Kubernetes cluster, while the data plane runs the workloads on the cluster. Here is a diagram of the architecture:

Amazon EKS Clusters

An EKS cluster is a Kubernetes cluster that is managed by the EKS control plane. It consists of one or more Amazon EC2 instances that run Kubernetes nodes, which are connected to the EKS control plane via the Kubernetes API server. EKS clusters can span multiple Availability Zones for high availability and fault tolerance.

Amazon EKS Nodes

EKS nodes are EC2 instances that run Kubernetes pods. There are two types of EKS nodes: self-managed nodes and managed node groups.

Self-Managed Nodes

Customers can launch their own EC2 instances and configure them to run as Kubernetes nodes in an EKS cluster. Self-managed nodes give customers more control over the EC2 instances but require more management overhead.

Managed Node Groups

Customers can also create managed node groups using the EKS console, CLI, or API. Managed node groups automatically provision and manage EC2 instances that run as Kubernetes nodes in an EKS cluster. They provide a simplified and scalable way to manage nodes in the cluster.

Amazon EKS Networking

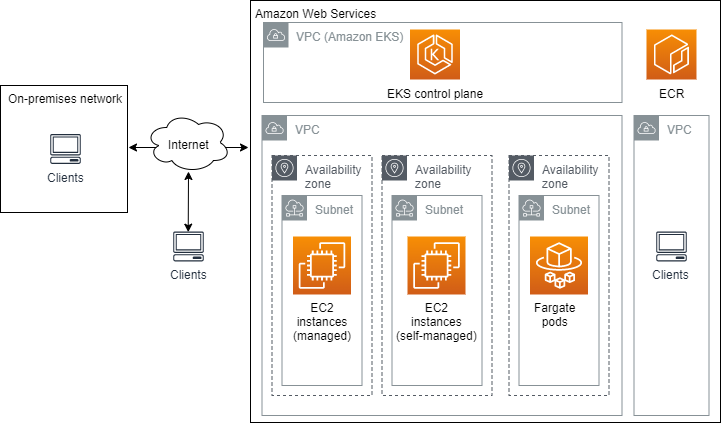

Here is a diagram that shows the main components of an EKS cluster in relation to a VPC:

EKS uses Amazon VPC and subnets to provide networking for the EKS cluster. The EKS Control Plane and worker nodes are launched in separate subnets for security and isolation. The EKS Control Plane is accessible from within the VPC, and worker nodes can communicate with the control plane and each other over the VPC network. Here is how it works:

Amazon VPC and Subnets

Amazon EKS clusters are deployed in a VPC that provides network isolation and security for the cluster. The VPC is divided into subnets that span multiple Availability Zones, providing high availability and fault tolerance. Customers can configure the VPC and subnets to meet their specific security and networking requirements.
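As a rough illustration of the multi-AZ requirement, the sketch below checks that a set of subnets covers at least two Availability Zones before they are used for a cluster. The subnet IDs and zone names are made up, and in practice the subnet-to-zone mapping would come from your own VPC inventory.

```python
from collections import defaultdict


def check_subnet_spread(subnet_zones, min_zones=2):
    """Group subnets by Availability Zone and report whether enough zones are covered.

    subnet_zones: mapping of subnet ID -> Availability Zone name.
    Returns (ok, zones), where zones maps each zone to its subnet IDs.
    """
    zones = defaultdict(list)
    for subnet_id, zone in subnet_zones.items():
        zones[zone].append(subnet_id)
    return len(zones) >= min_zones, dict(zones)


if __name__ == "__main__":
    # Hypothetical subnets for illustration only.
    ok, zones = check_subnet_spread({
        "subnet-aaaa": "us-east-1a",
        "subnet-bbbb": "us-east-1b",
        "subnet-cccc": "us-east-1b",
    })
    print("spans enough zones:", ok)
    for zone, subnets in sorted(zones.items()):
        print(zone, subnets)
```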

Amazon EKS Control Plane

The EKS Control Plane is the Kubernetes control plane, which includes the Kubernetes API server, etcd, and other control plane components. It runs across multiple Availability Zones for high availability and fault tolerance, in a VPC managed by AWS rather than in the customer's subnets. Customers access the control plane through the cluster's Kubernetes API server endpoint. By default, this endpoint is reachable from the internet and protected by IAM authentication and Kubernetes RBAC. Customers can enable private access so that traffic from within the VPC stays on the VPC network, and can restrict or disable public access.

Amazon EC2 Instances

EKS uses EC2 instances to launch worker nodes that run Kubernetes pods. These EC2 instances can be self-managed nodes or part of managed node groups. The worker nodes are typically launched in private subnets and communicate with the EKS Control Plane over the VPC network. Customers can configure security groups and network access control lists (ACLs) to control traffic flow to and from the worker nodes.

Learn more in our detailed guide to EKS on EC2 Graviton2 instances

AWS EKS Pricing

AWS EKS pricing includes two main components:

- Cluster management pricing: This includes a fixed hourly rate per cluster for EKS control plane management. As of 2023, the hourly rate is $0.10 for each EKS cluster in the region. This fee is prorated by the second and billed on a monthly basis.

- Worker node pricing: EKS worker node pricing depends on the type and number of EC2 instances that customers use. Customers can use On-Demand Instances, or reduce costs with Reserved Instances or Spot Instances. EKS does not add a per-node fee for standard EC2 worker nodes: customers pay the regular rates for the EC2 instances and any attached EBS volumes.

Other factors that may affect EKS pricing include the amount of data transfer and storage used by the cluster, as well as any additional AWS services used in conjunction with EKS.

It’s important to note that EKS pricing can vary depending on the region, instance type, and other factors. Customers can use the AWS pricing calculator to estimate the cost of running an EKS cluster based on specific requirements.
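To make the arithmetic concrete, here is a minimal estimate of a monthly bill. It assumes the only EKS-specific charge is the $0.10 hourly cluster fee quoted above and that nodes are billed at a flat hourly EC2 rate. The node rate and the 730-hour month are placeholder assumptions, and data transfer, storage, and other services are ignored.

```python
HOURS_PER_MONTH = 730  # common approximation: 8,760 hours per year / 12


def monthly_cluster_cost(node_count, node_hourly_rate,
                         control_plane_hourly_rate=0.10,
                         hours=HOURS_PER_MONTH):
    """Estimate monthly cost in USD: control plane fee plus EC2 node cost."""
    control_plane = control_plane_hourly_rate * hours
    nodes = node_count * node_hourly_rate * hours
    return {
        "control_plane": round(control_plane, 2),
        "nodes": round(nodes, 2),
        "total": round(control_plane + nodes, 2),
    }


if __name__ == "__main__":
    # 3 nodes at a hypothetical $0.096/hour On-Demand rate.
    estimate = monthly_cluster_cost(node_count=3, node_hourly_rate=0.096)
    print(estimate)  # control_plane 73.0, nodes 210.24, total 283.24
```

For real planning, the AWS pricing calculator remains the better tool, since actual rates differ by region and instance type.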

EKS Fargate Pricing

Here is how this service is billed:

- Pricing is based on the vCPU and memory resources consumed by pods.

- The cost of running Fargate pods is in addition to the cost of running the EKS control plane.

- The cost per vCPU-hour and per GB-hour varies by region and is subject to change.
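The billing model above reduces to a simple formula: hours × (vCPU × vCPU rate + GB × GB rate). The sketch below applies it to one pod. The two rates are placeholders, not actual AWS prices, and should be replaced with the current values for your region.

```python
def fargate_pod_cost(vcpu, memory_gb, hours, vcpu_hour_rate, gb_hour_rate):
    """Cost in USD of one pod, billed on configured vCPU and memory over time."""
    return round(hours * (vcpu * vcpu_hour_rate + memory_gb * gb_hour_rate), 4)


if __name__ == "__main__":
    # Placeholder rates for illustration only; look up real regional prices.
    cost = fargate_pod_cost(vcpu=0.5, memory_gb=1, hours=730,
                            vcpu_hour_rate=0.04, gb_hour_rate=0.004)
    print(f"estimated monthly pod cost: ${cost:.2f}")
```

The control plane fee is not included here, since it is charged per cluster rather than per pod.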

Learn more in our detailed guide to EKS vs Fargate (coming soon)

AWS Outposts

AWS Outposts is a service that allows Amazon customers to run AWS resources within their on-premises data center. Key points about using this pricing model for EKS:

- Pricing for EKS on Outposts is the same as regular EKS, with the addition of Outposts data transfer fees.

- Customers are charged for the Outposts infrastructure resources, such as compute and storage, and the data transfer fees.

- The cost of running EKS on Outposts varies based on the number and type of instances deployed.

EKS Anywhere Pricing

EKS Anywhere lets customers use their own infrastructure. Here are important aspects to consider before choosing this pricing model:

- The cost of running EKS Anywhere depends on the underlying infrastructure used and any additional services that might be needed.

- Customers need to provide their own compute, storage, and networking resources, which may include purchasing hardware or using a cloud provider.

- Customers might need to purchase software licenses or pay for any external services used with EKS Anywhere.

- There is no additional cost for the EKS Anywhere software itself, as it is open-source and available for free.

Learn more in our detailed guide to EKS pricing

AWS EKS Best Practices

Use Spot Instances

Using AWS Spot instances for EKS worker nodes can help you reduce the cost of running your applications on EKS while maintaining high availability and performance. AWS Spot instances are spare EC2 instances that can be purchased at a significant discount compared to On-Demand instances.

Using Spot instances with EKS provides several benefits for running your containerized applications on AWS:

- Flexible pricing: Spot instance prices can fluctuate based on supply and demand. This means that you can take advantage of lower prices during periods of low demand and scale up your capacity when the demand increases. This can help you optimize your infrastructure costs and reduce your overall spend on AWS.

- High availability: AWS EKS supports Spot instances in Auto Scaling Groups (ASGs), which allows you to ensure high availability for your applications. You can configure the ASGs to launch and maintain a desired number of Spot instances across multiple availability zones, ensuring that your applications remain available even if some Spot instances are terminated.

- Diversification: AWS EKS supports running Spot instances on multiple instance types, sizes, and availability zones. By using a diversified Spot fleet, you can increase the chances of finding available capacity for your Spot instances and reduce the risk of interruptions due to price fluctuations or capacity constraints.
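One practical way to diversify is to pool instance types that offer the same vCPU and memory, so that any of them can stand in for another when capacity is scarce. The sketch below groups candidate types by shape. The candidate list is illustrative, and the specifications should be checked against current EC2 documentation before use.

```python
from collections import defaultdict


def group_by_shape(instance_types):
    """Group instance type names by their (vCPUs, memory in GiB) shape.

    instance_types: mapping of instance type name -> (vcpus, memory_gib).
    Returns a mapping of shape -> sorted list of interchangeable type names.
    """
    groups = defaultdict(list)
    for name, shape in instance_types.items():
        groups[shape].append(name)
    return {shape: sorted(names) for shape, names in groups.items()}


if __name__ == "__main__":
    # Illustrative candidates; verify specs before relying on them.
    candidates = {
        "m5.large": (2, 8),
        "m5a.large": (2, 8),
        "m4.large": (2, 8),
        "c5.large": (2, 4),
        "m5.xlarge": (4, 16),
    }
    for shape, names in sorted(group_by_shape(candidates).items()):
        print(shape, names)
```

Each resulting group is a candidate pool for a single Spot node group; larger pools give more chances of finding available capacity.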

Use Multiple Availability Zones

To ensure high availability and resiliency, it is recommended to distribute your EKS worker nodes across multiple availability zones. This will help ensure that your applications remain available in case of a failure or outage in one availability zone. You can use an Auto Scaling Group (ASG) to launch worker nodes in multiple availability zones.

To ensure that your worker nodes are distributed across multiple availability zones, configure the ASG with subnets in each availability zone you want to use. The ASG then attempts to balance instances evenly across those zones. The “maxSize” parameter only caps the total number of nodes in the group and does not determine which zones are used, so set the desired capacity to at least the number of zones if you want a node in each one.
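The effect of spreading nodes across zones can be illustrated with a simplified model. The sketch below divides a desired node count evenly across zones and reports how many nodes remain if one zone fails. It is an approximation for reasoning about capacity, not a description of the actual ASG placement logic, and the zone names are placeholders.

```python
def spread_across_zones(desired_capacity, zones):
    """Split nodes as evenly as possible across zones (simplified model)."""
    base, extra = divmod(desired_capacity, len(zones))
    return {zone: base + (1 if i < extra else 0) for i, zone in enumerate(zones)}


def capacity_after_zone_loss(placement, failed_zone):
    """Number of nodes still running if one zone becomes unavailable."""
    return sum(count for zone, count in placement.items() if zone != failed_zone)


if __name__ == "__main__":
    placement = spread_across_zones(5, ["us-east-1a", "us-east-1b", "us-east-1c"])
    print(placement)
    print("nodes left without us-east-1a:",
          capacity_after_zone_loss(placement, "us-east-1a"))
```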

Leverage Autoscaling

Autoscaling allows you to automatically scale your EKS cluster based on the demand for resources. This helps keep your cluster sized for your applications’ needs, so you don’t waste resources on idle nodes. You can use the Kubernetes Cluster Autoscaler with EKS to scale worker nodes based on the demand for resources.

The Cluster Autoscaler automatically adjusts the size of your ASG based on pending pods and node utilization. When a pod cannot be scheduled due to insufficient resources, the Autoscaler launches additional worker nodes to meet the demand. When demand decreases and nodes are underutilized, the Autoscaler removes the unneeded worker nodes.
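The scale-up decision can be approximated with a small packing exercise: given the CPU requests of pods that cannot be scheduled, how many new nodes of a given size would fit them? The sketch below uses a first-fit-decreasing heuristic on CPU only. It is a deliberately simplified stand-in, not the autoscaler's actual algorithm, which also considers memory, scheduling constraints, and node group limits.

```python
def nodes_needed(pending_cpu_millicores, node_cpu_millicores):
    """Estimate new nodes required to fit pending pods (CPU requests only).

    Uses first-fit decreasing: place the largest requests first, each on the
    first new node with enough free CPU, adding a node when none fits.
    """
    free = []  # remaining CPU on each new node
    for request in sorted(pending_cpu_millicores, reverse=True):
        if request > node_cpu_millicores:
            raise ValueError(f"request {request}m exceeds node capacity")
        for i, available in enumerate(free):
            if request <= available:
                free[i] -= request
                break
        else:
            # No existing new node has room, so add another one.
            free.append(node_cpu_millicores - request)
    return len(free)


if __name__ == "__main__":
    pending = [1500, 1000, 500, 500]  # hypothetical unschedulable pods
    print("new nodes to launch:", nodes_needed(pending, node_cpu_millicores=2000))
```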

Learn more in our detailed guide to EKS autoscaling

Secure EKS Clusters

Securing your EKS cluster is critical to protect your applications and data. AWS provides several security best practices that you can follow to secure your EKS cluster, including:

- Securing your cluster API server endpoint: Use AWS PrivateLink or a VPN to secure access to your cluster API server endpoint.

- Encrypting your data at rest: Use AWS EBS encryption or AWS KMS encryption to encrypt your data at rest.

- Using IAM policies to control access to your cluster resources: Use IAM roles and policies to grant least privilege access to your EKS cluster resources.

- Enabling Kubernetes RBAC: Use Kubernetes Role-Based Access Control (RBAC) to control access to Kubernetes resources within your cluster.
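As an example of least-privilege RBAC, the sketch below builds a Role that can only read pods in one namespace and a RoleBinding that grants it to a single user. The namespace, role name, binding name, and user name are placeholders. The manifests are emitted as JSON, which kubectl accepts alongside YAML.

```python
import json

RBAC_API = "rbac.authorization.k8s.io"


def pod_reader_manifests(namespace, user):
    """Return a read-only pod Role and a RoleBinding for one user."""
    role = {
        "apiVersion": f"{RBAC_API}/v1",
        "kind": "Role",
        "metadata": {"name": "pod-reader", "namespace": namespace},
        "rules": [{
            "apiGroups": [""],           # "" is the core API group
            "resources": ["pods"],
            "verbs": ["get", "list", "watch"],  # read-only access
        }],
    }
    binding = {
        "apiVersion": f"{RBAC_API}/v1",
        "kind": "RoleBinding",
        "metadata": {"name": "read-pods", "namespace": namespace},
        "subjects": [{"kind": "User", "name": user, "apiGroup": RBAC_API}],
        "roleRef": {"kind": "Role", "name": "pod-reader", "apiGroup": RBAC_API},
    }
    return [role, binding]


if __name__ == "__main__":
    # Placeholder namespace and user for illustration.
    for manifest in pod_reader_manifests("demo", "jane"):
        print(json.dumps(manifest, indent=2))
```

Scoping the Role to a namespace and to read-only verbs keeps the grant narrow; broader access would need additional rules or a ClusterRole.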

Ensure availability and optimize Amazon Elastic Kubernetes Service with Spot

Spot’s portfolio provides hands-free Kubernetes optimization. It continuously analyzes how your containers use infrastructure and automatically scales compute resources to maximize utilization and availability, using an optimal blend of spot, reserved, and on-demand compute instances.

- Dramatic savings: Access spare compute capacity for up to 91% less than pay-as-you-go pricing

- Cloud-native autoscaling: Effortlessly scale compute infrastructure for both Kubernetes and legacy workloads

- High-availability SLA: Reliably leverage Spot VMs without disrupting your mission-critical workloads

Learn more about how Spot supports all your Kubernetes workloads.
