Modern, cloud-based software development lifecycles have quickly evolved from waterfall and are fully embracing the agile principles of DevOps. As part of this shift, continuous delivery practices have been adopted, giving organizations the capability to deliver and release code faster and more frequently than ever before. CI/CD tools bring velocity — code is always ready to be deployed, enabling organizations to commit multiple times a day. But how do you ship code dozens, hundreds or even thousands of times a day without impacting the end user?

Enter progressive delivery.

What is progressive delivery?

Progressive delivery brings control to delivery processes and CI/CD pipelines, enabling users to choose when and how new code is being delivered. It builds upon the practices of CI/CD to maintain speed of development and delivery, while decreasing the risk of breaking things by gradually rolling out deployments. With progressive delivery, which includes blue/green and canary deployment strategies, code is rolled out to a small number of users to ensure quality before releasing to the wider user base. If something goes wrong, the application can easily be rolled back, and the majority of users won’t know a thing.

Progressive delivery and Kubernetes

While progressive delivery improves the reliability and stability of deployments, applying it in complex microservice environments doesn’t come easy. Built as a container orchestrator, not a deployment system, Kubernetes requires some manual work to execute progressive delivery. Kubernetes’ default rolling update strategy is simple to use, but it offers less granular control over user traffic and rollback than more advanced deployment methods like blue/green and canary. Critical phases of CD are still not fully automated, leaving DevOps teams to push code to production manually through opaque pipelines.

With these challenges, it’s clear that a new breed of Kubernetes-first CD tools is needed. That is what the team behind the Argo project delivered when they released v1.0 of Argo Rollouts, an open source Kubernetes controller for GitOps-based progressive delivery. Argo Rollouts is designed to replace the default Kubernetes rollout strategies with more advanced deployments, and is programmable to fit a wide range of use cases. Argo Rollouts takes a developer-oriented approach and is designed to be intuitive, giving users ways to reduce management overhead:

- Argo Rollouts runs within the Kubernetes cluster and does not require credentials or permissions to be set up outside of the cluster.

- Users can define deployment strategy steps for canary and blue/green deployments.

At Spot, we were excited to evaluate this tool and share our learnings with our customers and the Kubernetes community. Using an argocd-demo application, our DevOps engineers and developers started by installing Argo Rollouts for a single cluster on AWS.

Installation and Getting Started

Single cluster installation:

See the Argo Rollouts documentation for detailed installation instructions.

Argo Rollouts is installed and managed in a Kubernetes-native way, and introduces a new Kubernetes CRD, the Rollout. A Rollout offers the same functionality as a Kubernetes Deployment, but the Argo Rollouts controller monitors it for changes and controls how each update is rolled out. Users define a specific rollout strategy in the Rollout’s manifest file.

An example of a Rollout manifest file with canary strategy:

    apiVersion: argoproj.io/v1alpha1
    kind: Rollout
    metadata:
      name: canary-demo
    spec:
      replicas: 5
      revisionHistoryLimit: 1
      selector:
        matchLabels:
          app: canary-demo
      template:
        metadata:
          labels:
            app: canary-demo
        spec:
          containers:
          - name: canary-demo
            image: argoproj/rollouts-demo:blue
            imagePullPolicy: Always
            ports:
            - name: http
              containerPort: 8080
              protocol: TCP
            resources:
              requests:
                memory: 32Mi
                cpu: 5m
      strategy:
        canary:
          canaryService: canary-demo-preview
          steps:
          - setWeight: 20
          - pause: {}
          - setWeight: 40
          - pause: {duration: 10}
          - setWeight: 60
          - pause: {duration: 10}
          - setWeight: 80
          - pause: {duration: 10}
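The manifest above points its canaryService at a Service named canary-demo-preview, which has to exist in the same namespace as the Rollout. As a minimal sketch, assuming the application should be exposed on port 80 (our assumption, not part of the original demo), such a Service could look like this:

```yaml
# Hypothetical Service backing the canaryService reference in the Rollout.
apiVersion: v1
kind: Service
metadata:
  name: canary-demo-preview
spec:
  selector:
    app: canary-demo        # same label the Rollout's pod template carries
  ports:
  - name: http
    port: 80                # assumed service port
    targetPort: http        # the named container port (8080) from the manifest
    protocol: TCP
```

During an update, the controller is expected to narrow this Service's selector to the canary ReplicaSet by adding a pod-template-hash label, so the selector here only needs to match the application's pods in general.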

The installation process is convenient and simple, and is supported by most standard package management tools. After installation, Argo Rollouts runs on Kubernetes in its own namespace, and all configuration is stored in the Kubernetes cluster itself, in Custom Resources, ConfigMaps and Secrets (the latter holding credentials). Using the Kubernetes CLI, kubectl, you can verify that all the components are up and running.

Multi Cluster Support

For companies like Spot that manage multiple clusters in different regions, it’s important to be able to control deployments. To do so, visibility, monitoring and observability are critical. Out of the box, Argo Rollouts has no centralized way to monitor the state of multiple clusters running Argo Rollouts, and offers limited visibility into cluster state both during and after a rollout. The highest logical entity that can be visualized is the namespace, which limits the user’s ability to manage multiple clusters in one place.

With no simple way to achieve this, users have to script or develop their own tools: either querying information from Argo Rollouts and sending it to a monitoring system, or building their own dashboard to follow deployment progress.

Argo Rollouts Controller

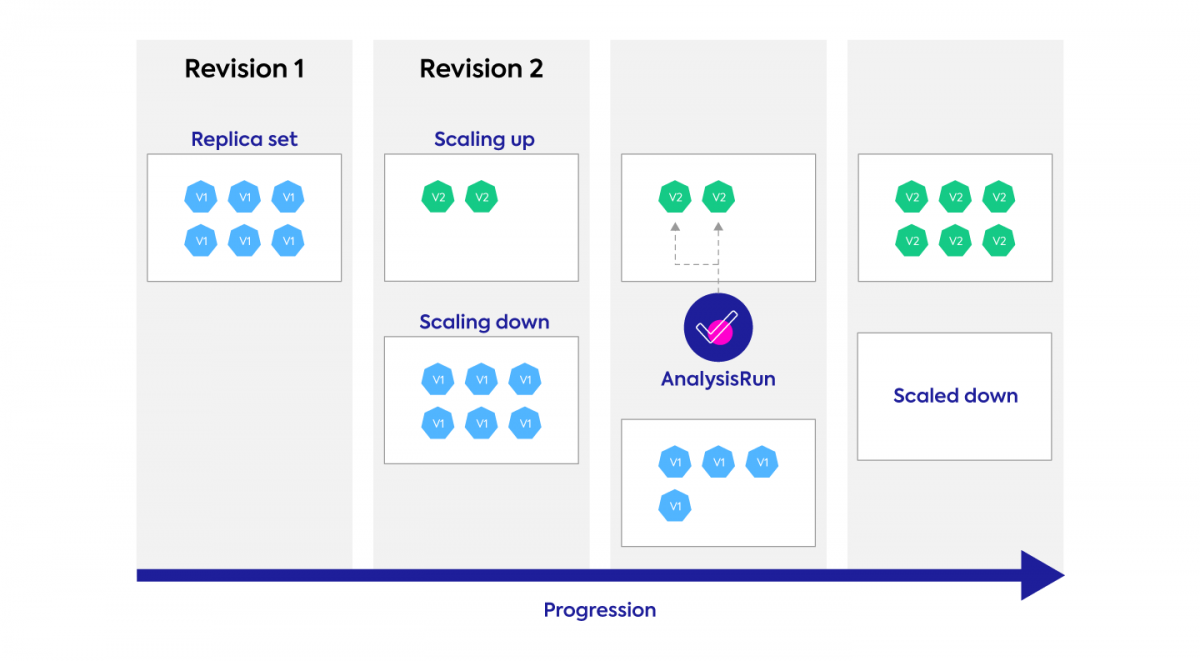

The Argo Rollouts controller is the component that orchestrates the actual deployment according to the desired deployment strategy. It manages the Rollout resource, which takes the place of the Kubernetes Deployment, and manipulates ReplicaSets to reflect the desired state of that resource. The Rollout CRD is intended to act as a declarative replacement for a standard Kubernetes Deployment. As with a Kubernetes Deployment, the manifest uses a selector to specify which pods it should manage, along with the number of pod replicas to maintain. It is the ReplicaSet controller’s job to add or remove pods as necessary to match the desired replicas as configured in the manifest.
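Because a Rollout mirrors the shape of a Deployment, one way to adopt it without duplicating the pod template is to have the Rollout reference an existing Deployment through workloadRef. The sketch below assumes a Deployment named canary-demo already exists in the same namespace; that name and the two strategy steps are illustrative assumptions, not part of our evaluation setup.

```yaml
apiVersion: argoproj.io/v1alpha1
kind: Rollout
metadata:
  name: canary-demo
spec:
  replicas: 5
  selector:
    matchLabels:
      app: canary-demo
  # Reuse the pod template of an existing Deployment instead of
  # repeating it under spec.template. The Deployment name is hypothetical.
  workloadRef:
    apiVersion: apps/v1
    kind: Deployment
    name: canary-demo
  strategy:
    canary:
      steps:
      - setWeight: 20
      - pause: {}           # wait for manual promotion
```

With this approach the referenced Deployment would typically be scaled down to zero so that only the Rollout's ReplicaSets serve traffic.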

Rollout Strategies

The two most prominent rollout strategies are canary and blue/green, both of which are supported by Argo Rollouts. For this blog post, we’re focusing on canary deployments, which have become a popular deployment method for the control they give the user.
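For comparison with the canary manifest shown earlier, a blue/green strategy is declared under the same spec.strategy key. The fragment below is a minimal sketch; the two Service names are hypothetical and would need to exist alongside the Rollout.

```yaml
# Fragment of a Rollout spec; only the strategy block is shown.
spec:
  strategy:
    blueGreen:
      activeService: demo-active      # hypothetical Service receiving live traffic
      previewService: demo-preview    # hypothetical Service exposing the new version
      autoPromotionEnabled: false     # require a manual promotion before switching
```

Here all traffic switches at once when the new version is promoted, rather than shifting in weighted steps as it does with canary.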

Canary deployment strategies gradually redirect some of an application’s traffic from an older version to a newly deployed version. To minimize the blast radius should something go wrong, the release is tested with a small pool of real traffic before rolling out to a larger population. Organizations can choose how to define their canary deployments, whether slow and gradual or swift and sudden. To control traffic to the application, an ingress controller or a service mesh such as Istio can be used. You can choose users with specific characteristics and route them to the newly deployed version while others are routed to the older version.
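To illustrate how traffic control plugs into the strategy, the fragment below sketches a canary configuration that delegates weighting to Istio. The stable Service, the VirtualService name and its route name are assumptions made for this example; only canary-demo-preview comes from the manifest shown earlier.

```yaml
# Fragment of a Rollout spec; only the strategy block is shown.
spec:
  strategy:
    canary:
      canaryService: canary-demo-preview
      stableService: canary-demo-stable     # hypothetical Service for the current version
      trafficRouting:
        istio:
          virtualService:
            name: canary-demo-vsvc          # hypothetical Istio VirtualService
            routes:
            - primary                       # assumed name of the HTTP route to adjust
      steps:
      - setWeight: 20       # send 20% of requests to the canary
      - pause: {}           # wait for manual promotion
```

Without a traffic router, setWeight is approximated by the ratio of canary to stable pods; with one, the weights are applied to requests directly.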

Continuous Verification

Another important aspect of progressive delivery is testing. When real traffic starts to flow to the newly deployed version of the application, continuous verification will alert you to any unexpected behavior and enable you to quickly roll back.

Knowing what to test and when to react, however, requires more engineering effort than any other part of a canary deployment. Argo Rollouts allows you to configure tests and set triggered behaviors, but it’s up to you to decide what to test, when to test, and for how long.
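As one possible way to express such a test, the sketch below defines an AnalysisTemplate that checks an HTTP success rate in Prometheus. The Prometheus address, the metric and label names in the query, and the 95% threshold are all assumptions chosen for illustration; they would need to match your own monitoring setup.

```yaml
apiVersion: argoproj.io/v1alpha1
kind: AnalysisTemplate
metadata:
  name: success-rate                  # hypothetical template name
spec:
  args:
  - name: service-name                # supplied by whoever runs the analysis
  metrics:
  - name: success-rate
    interval: 1m                      # how often to take a measurement
    successCondition: result[0] >= 0.95
    failureLimit: 3                   # tolerate up to three failed measurements
    provider:
      prometheus:
        address: http://prometheus.example.com:9090   # assumed endpoint
        # Assumed metric and labels: share of non-5xx requests over 5 minutes.
        query: |
          sum(rate(http_requests_total{service="{{args.service-name}}",status!~"5.."}[5m]))
          /
          sum(rate(http_requests_total{service="{{args.service-name}}"}[5m]))
```

The interval, failureLimit and successCondition fields are where the "what, when and for how long" decisions end up being encoded.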

A best practice for continuous verification is to set up an experiment and test the rollout. The Experiment CRD in Argo Rollouts allows users to have ephemeral runs of one or more ReplicaSets. In addition to running ephemeral ReplicaSets, the Experiment CRD can launch AnalysisRuns alongside the ReplicaSets. Generally, an AnalysisRun is used to confirm that new ReplicaSets are running as expected.
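A minimal sketch of such an Experiment is shown below. It runs a single ephemeral ReplicaSet for ten minutes and starts an AnalysisRun next to it. The image tag, the labels, the duration and the referenced success-rate AnalysisTemplate are assumptions for this example, and the template is assumed to already exist in the namespace.

```yaml
apiVersion: argoproj.io/v1alpha1
kind: Experiment
metadata:
  name: canary-demo-experiment        # hypothetical name
spec:
  duration: 10m                       # ReplicaSets are scaled down after this time
  templates:
  - name: candidate
    replicas: 1
    selector:
      matchLabels:
        app: canary-demo-experiment
    template:
      metadata:
        labels:
          app: canary-demo-experiment
      spec:
        containers:
        - name: canary-demo
          image: argoproj/rollouts-demo:yellow   # assumed candidate image tag
          ports:
          - name: http
            containerPort: 8080
  analyses:
  - name: success-rate
    templateName: success-rate        # assumed existing AnalysisTemplate
    args:
    - name: service-name
      value: canary-demo-experiment
```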

Once a canary deployment starts, continuous testing can also be performed to test the new release before it reaches all user populations. While the canary is running and starting to accept production traffic, monitoring for latency and application errors will help to determine whether the deployment should progress or roll back.
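One way to wire this into a Rollout is a background analysis that runs while the canary steps progress. The fragment below is a sketch that assumes an AnalysisTemplate named success-rate exists and accepts a service-name argument; the step durations are likewise illustrative.

```yaml
# Fragment of a Rollout spec; only the strategy block is shown.
spec:
  strategy:
    canary:
      canaryService: canary-demo-preview
      analysis:
        templates:
        - templateName: success-rate  # assumed existing AnalysisTemplate
        startingStep: 2               # begin analysis at the third step (setWeight: 40)
        args:
        - name: service-name
          value: canary-demo-preview
      steps:
      - setWeight: 20
      - pause: {duration: 10m}
      - setWeight: 40
      - pause: {duration: 10m}
      - setWeight: 60
      - pause: {duration: 10m}
```

If the analysis fails, the rollout is aborted and traffic is returned to the stable version; if it keeps passing, the steps continue until the new version is fully promoted.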

Conclusion

Argo Rollouts is a popular choice in the landscape of Continuous Delivery, and there are many aspects that Argo Rollouts excels in. For developers, it enables deployment processes to be handled as code, while it can reduce operational overhead for DevOps. With flexible deployment processes, Argo Rollouts has capabilities that may improve the overall experience and availability of deployed services. It is easy to install and manage, and can be a part of the Kubernetes cluster itself, but aspects of observability into the deployment processes are limited.

Argo Rollouts has helped to usher in a Kubernetes-centric approach to progressive delivery, and now Spot is bringing our experience in Kubernetes to the CI/CD landscape with Ocean CD. Recently announced, Ocean CD is a fully managed SaaS offering that provides end-to-end control and standardization for frequent deployment processes of Kubernetes applications at scale. With Ocean CD, Spot has built a developer-centric, Kubernetes-native solution that will simplify release processes and provide full support of progressive delivery with automated deployment rollout and management.