Selecting the right infrastructure at the right time for your Azure Kubernetes Service (AKS) cluster is not an easy decision. Your AKS cluster has hundreds of dynamic workloads that expand or contract based on application needs. You need to scale up quickly when the application demands it and scale down when resources are not needed to reduce cloud costs.

Are you constantly wondering if you are making the right node selections for your AKS cluster with hundreds of applications?

- Spot VMs? Should you select Spot or regular (on-demand) nodes? Spot VMs save costs, but what if there are interruptions?

- Too aggressive? Selecting nodes with exactly the resources needed saves on costs but leaves no room for expansion, and might be too slow to scale up when there is a spike.

- Too cautious? Selecting nodes with larger capacity scales up faster during workload spikes but risks low resource utilization and higher monthly costs.

The solution: Spot Ocean AKS, which optimizes your cluster for both service availability and cost.

Spot Ocean AKS is here

Spot Ocean is the market leader in Kubernetes cloud optimization and Ocean AKS brings an enterprise-ready serverless engine to Azure.

Ocean AKS has been developed in partnership with Microsoft and is tightly integrated with the standard AKS building blocks (node pools) and the native AKS APIs (node pool and VMSS APIs).

Ocean AKS: Look under the hood

Ocean AKS solves many of the challenges that come with building applications with Kubernetes: optimizing performance, reliability, and cost-efficiency; automating and simplifying infrastructure management; and improving continuous deployment of applications at scale.

Let’s review what makes Spot Ocean the best solution in the market for AKS cloud optimization:

Enterprise-grade: Deploy with confidence

Spot Ocean is a multi-cloud SaaS solution that allows you to use the same UI and API on all the major cloud providers. This ensures your teams experience the same interfaces and have a common visual understanding, saving you the burden of managing multiple tools with different levels of maturity.

Ocean AKS delivers secure, reliable, and highly available (uptime SLA 99.99%) AKS cluster infrastructure control. Spot Ocean is ISO 27001-certified and holds the SOC 2 Type II certification.

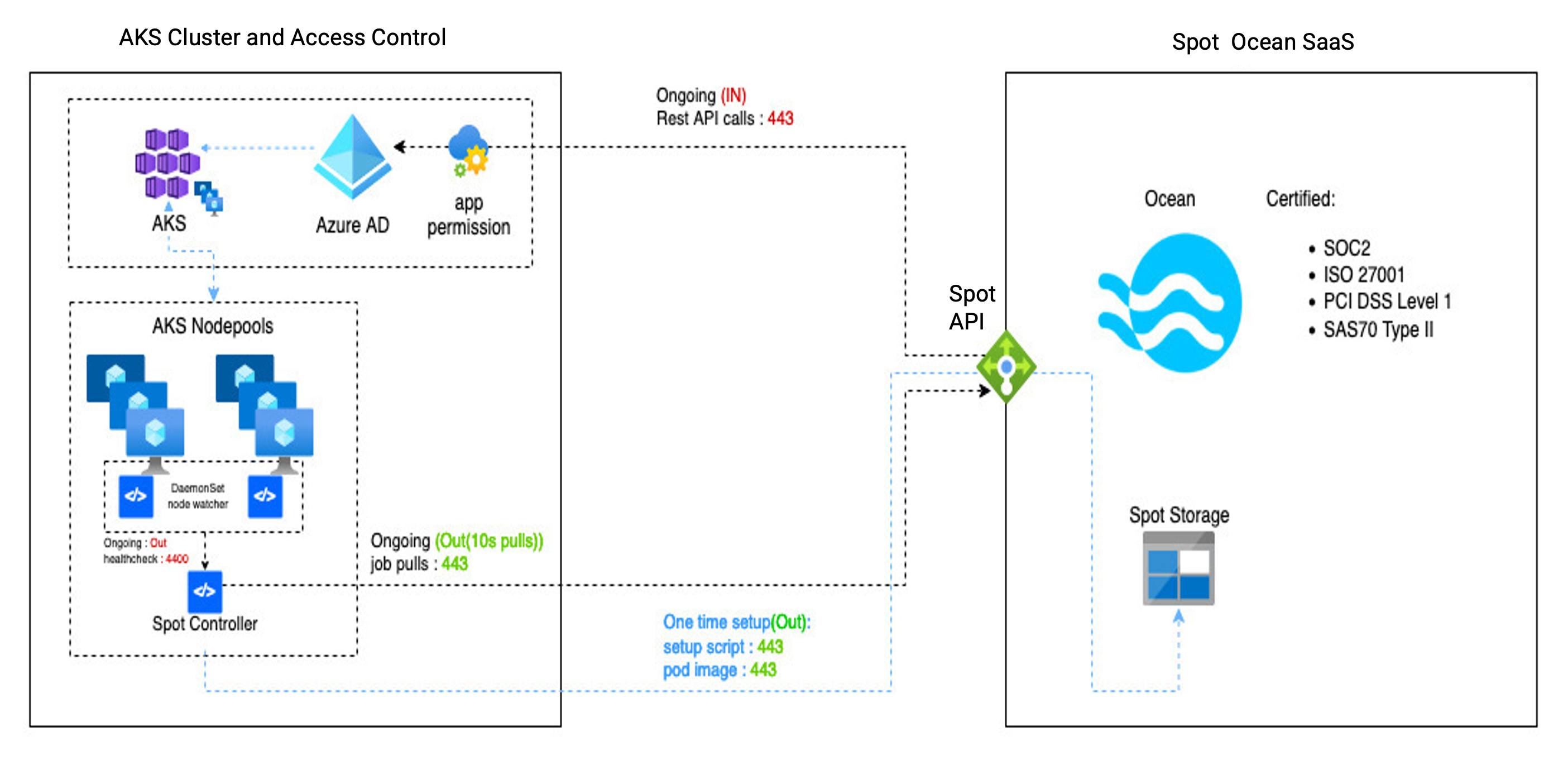

To run Ocean AKS, you need to install the Ocean controller in your AKS cluster. The Ocean controller requires only limited (read-only) permissions to query cluster resources, plus optional permissions to drain and delete nodes for scale-down operations. If you enable Ocean for Apache Spark for Big Data applications on AKS, further optional permissions are needed to update sparkOperator resources (sparkApplications).

Ocean AKS has no access to your application data, and your data remains within your cluster. Only metadata on workload state (pending or running, resource utilization, replica count) and workload configuration (controller type, pod name, resource requests/limits, labels and tolerations, constraints) is sent to the Ocean AKS engine to make scaling decisions.

All communication between the Ocean AKS engine and the AKS cluster is encrypted using HTTPS (port 443). Ocean AKS uses application-level role-based access control (RBAC) with centralized authorization and JSON Web Tokens (JWT) to identify authenticated users.

Ocean AKS high-level architecture is shown below:

Ocean AKS has enhanced observability (status, logs, events, alerts, metrics) to help find and debug cluster health and scaling issues more quickly.

Spot Support has industry-leading customer satisfaction with 24/7 support (three locations worldwide) and expertise on Azure cloud and AKS. You can easily contact support and open a ticket via Slack from within the Ocean UI console.

The Spot NOC provides proactive operations management, alerting you when there are red flags (misconfiguration, controller down), when you are about to exceed your Azure resource-group quotas (Spot quota or GPU quota limits), or when you experience Azure API throttling in your AKS cluster.

Serverless AKS: You bring the workload, Ocean brings the nodes

Spot Ocean provides a truly serverless AKS experience and removes the complexity of managing AKS worker nodes with smart-AI and automation for node selection and zero-touch provisioning.

This allows you to focus on your AKS application needs and leave the automation of the AKS infrastructure to Ocean. You can deliver more application services more quickly with the Ocean Serverless AKS engine.

Easy onboarding: Import your cluster into Ocean in 5 minutes

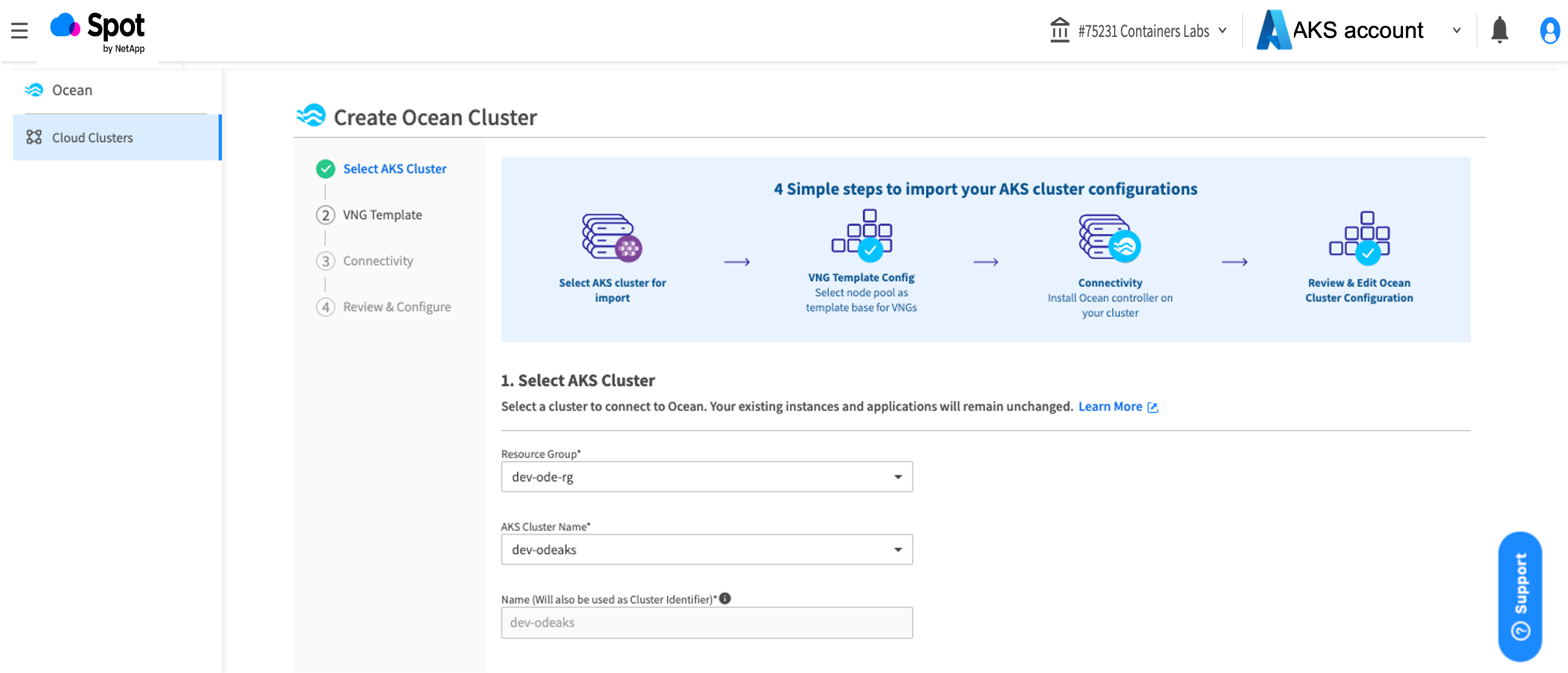

Spot Ocean provides a step-by-step Azure onboarding wizard to create a Spot account, connect your Azure account to Spot, and finally create a new Access Control (IAM) custom role with minimal resource group-level permissions to launch and manage nodes in your AKS cluster.

You can also complete these steps with Infrastructure as Code (IaC), using the azure-connect Terraform module.

Next, import your existing AKS clusters. Spot Ocean offers the Create Ocean Cluster wizard to import your AKS cluster, define a VNG template, and create a VNG (Virtual Node Group).

Alternatively, use IaC with the Terraform provider and modules to create and manage your Ocean cluster (ocean-aks-np) and VNG (ocean-aks-np-vng) configurations.

Ocean AKS supports importing and managing an AKS private cluster, where the cluster is located behind a firewall, has no public IP, and can only be reached via proxy, VPN, or ExpressRoute. The private cluster has no external Internet access, and all Kubernetes API communication must go over a private network via VPN or proxy.

High performance scaling: Zero to launch (nodes) in under 2 minutes

Ocean AKS provides you the right-sized nodes in the right place at the right time, so your applications are always running with minimum downtime.

Ocean AKS supports typical Kubernetes workload scaling constraints, such as node selectors, node affinity, pod affinity/anti-affinity, pod topology spread constraints, and pod disruption budgets, and scales according to the constraints defined in the workload or pod manifest.

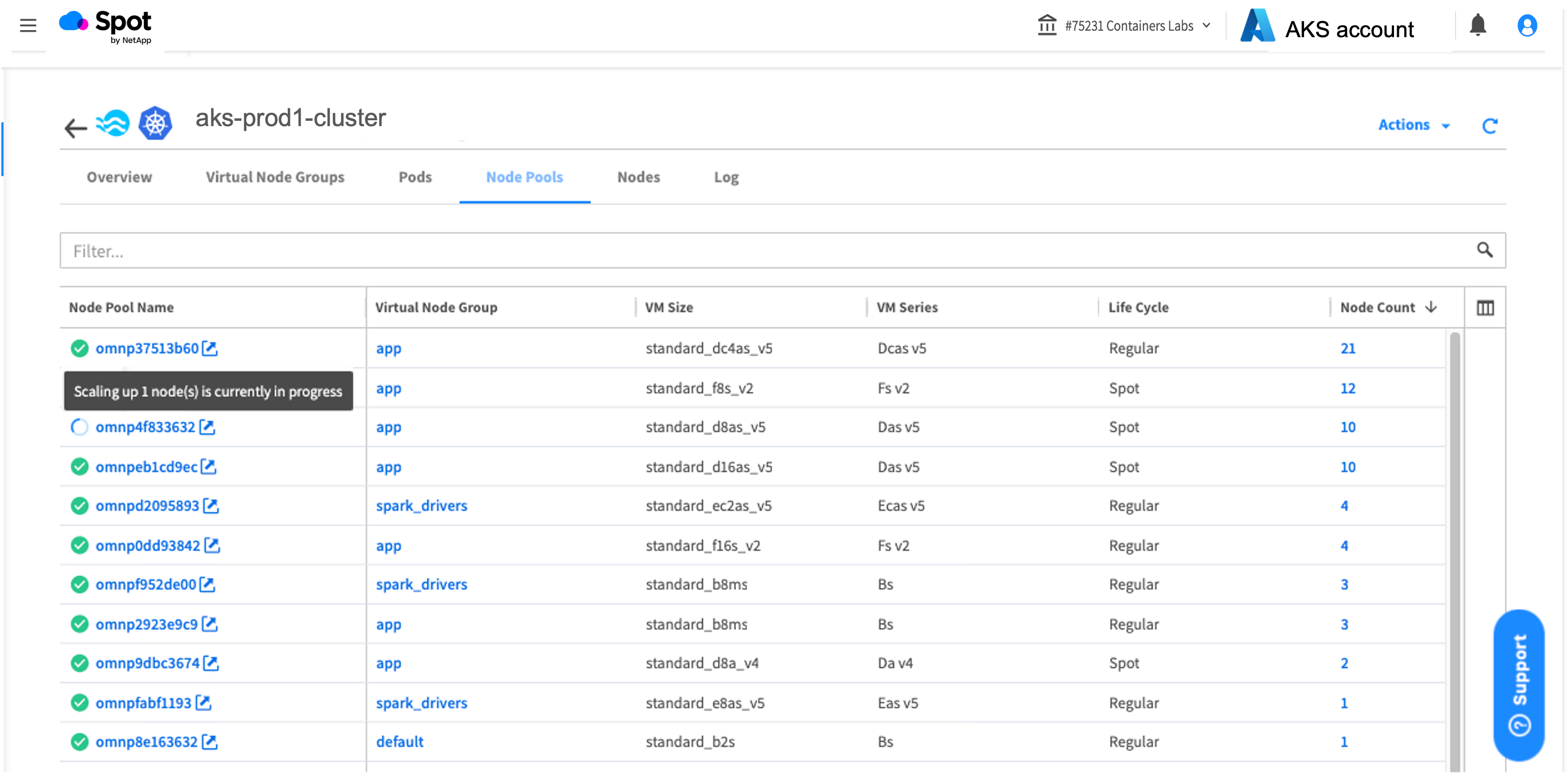

When there are pending pods that can’t be scheduled, the Ocean AKS engine gets into scale-up action, launching multiple nodes of different VM sizes and SKUs in different node pools and spreading nodes across availability zones. Go from pending to running pods in under two minutes.

During peak intervals when there is high demand, the Ocean AKS engine goes into full throttle to scale up 20-50 nodes at a time using multiple node pools (VM SKUs and VM sizes).

During non-peak intervals, when there is excess capacity and nodes are underutilized, Ocean bin-packs workloads onto fewer nodes and scales down the underutilized ones.
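To make the bin-packing idea concrete, here is a minimal sketch using the classic first-fit-decreasing heuristic. It is not Ocean's actual algorithm, which this article does not describe; the function name, the single CPU dimension, and the equally sized nodes are all simplifying assumptions.

```python
def first_fit_decreasing(pod_cpu_requests, node_cpu_capacity):
    """Pack pod CPU requests onto as few equally sized nodes as this heuristic finds.

    Illustrative only: real schedulers also weigh memory, GPUs,
    affinity rules, and disruption budgets.
    """
    nodes = []  # each entry lists the requests placed on one node
    for request in sorted(pod_cpu_requests, reverse=True):
        if request > node_cpu_capacity:
            raise ValueError(f"request {request} exceeds node capacity")
        for node in nodes:
            if sum(node) + request <= node_cpu_capacity:
                node.append(request)  # first node with enough room wins
                break
        else:
            nodes.append([request])  # no existing node fits: open a new one
    return nodes


# Six pods (vCPU requests) packed onto hypothetical 4-vCPU nodes.
packed = first_fit_decreasing([2, 1, 3, 1, 2, 1], node_cpu_capacity=4)
print(len(packed), packed)
```

If the cluster currently runs more nodes than the packing needs, the surplus nodes are the candidates for scale-down.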

For workloads with a PersistentVolumeClaim (PVC) for managed Azure disks of type LRS (locally redundant storage), Ocean AKS will launch nodes in the availability zone (AZ) where the persistent volume (PV) Azure LRS Disk is mounted.

Go all out on Spot VMs and don’t let interruptions hold you back

With Ocean AKS you can deploy mission-critical applications on Spot VMs in your production clusters and achieve up to 90% in cost savings, reducing your Azure cloud operational expenses (OpEx) and total cost of ownership (TCO).

Ocean gracefully handles interruptions with minimum downtime for workloads. When Ocean gets notified of an Azure node interruption 30 seconds before an actual event, it immediately attempts to safely drain the node and gracefully evict pods over 15 to 30 seconds, while proactively launching replacement node(s) for the pending pods to minimize downtime. Ocean distributes workloads across many different node pools and VM sizes/SKUs, so that if there is an interruption for a VM SKU, only a small percentage of nodes and workloads will be affected.
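The benefit of spreading nodes across VM sizes can be illustrated with simple arithmetic. The sketch below is only an illustration: the function name and node counts are hypothetical, and the VM size names are example values rather than a recommendation.

```python
def affected_fraction(nodes_by_sku, interrupted_sku):
    """Return the share of cluster nodes lost if one VM SKU is interrupted."""
    total = sum(nodes_by_sku.values())
    if total == 0:
        return 0.0
    return nodes_by_sku.get(interrupted_sku, 0) / total


# Hypothetical cluster: 20 Spot nodes spread evenly over four VM sizes.
spread = {
    "Standard_D4s_v5": 5,
    "Standard_D8s_v5": 5,
    "Standard_E4s_v5": 5,
    "Standard_F8s_v2": 5,
}
# The same 20 nodes concentrated on a single VM size.
concentrated = {"Standard_D4s_v5": 20}

print(affected_fraction(spread, "Standard_D4s_v5"))
print(affected_fraction(concentrated, "Standard_D4s_v5"))
```

With an even spread, an interruption of one SKU touches a quarter of the nodes; with a single SKU, it touches all of them.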

Ocean AKS enables you to easily deploy workloads on Azure Spot VMs (it dynamically injects the toleration for Spot VMs) and fall back to regular nodes if a Spot VM is unavailable.

You can have AKS clusters with mixed lifecycles (Spot and regular nodes) and tune the Spot percentage to get the right balance of cost savings and availability for your workloads.
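As a rough way to reason about that trade-off, the sketch below splits a node count by a Spot percentage and compares hourly cost. The function names, the rounding rule, the price, and the discount are all assumptions for illustration; they do not describe how Ocean rounds or what Azure charges.

```python
def split_by_spot_percentage(total_nodes, spot_percentage):
    """Split a node count into (spot, regular) for a given Spot percentage."""
    if not 0 <= spot_percentage <= 100:
        raise ValueError("spot_percentage must be between 0 and 100")
    # Rounding down is an assumption made for this sketch.
    spot_nodes = total_nodes * spot_percentage // 100
    return spot_nodes, total_nodes - spot_nodes


def hourly_cost_cents(spot_nodes, regular_nodes, regular_price_cents, spot_discount_percent):
    """Estimate hourly cost in cents, with Spot priced at a discount to regular."""
    spot_price_cents = regular_price_cents * (100 - spot_discount_percent) // 100
    return spot_nodes * spot_price_cents + regular_nodes * regular_price_cents


# Hypothetical inputs: 10 nodes, 70% Spot, 20 cents/hour regular price, 90% Spot discount.
spot, regular = split_by_spot_percentage(10, 70)
print(spot, regular)
print(hourly_cost_cents(spot, regular, 20, 90))  # mixed cluster
print(hourly_cost_cents(0, 10, 20, 90))          # all regular nodes
```

Raising the percentage lowers the estimated cost but puts more nodes at risk of interruption, which is the balance the Spot percentage setting controls.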

For workloads that need lifecycle-based topology spread constraints, you can spread pods across Spot and regular (on-demand) nodes. Set the topology key to the Spot node-lifecycle label, spotinst.io/node-lifecycle, and Ocean AKS will ensure that pod replicas are spread across both Spot VMs and regular (on-demand) nodes according to the maxSkew defined in the workload manifest.
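A manifest using this topology key might look like the following sketch, built as a Python dictionary and printed as JSON (which kubectl accepts alongside YAML). Only the topology key comes from the text above; the app name, image, replica count, and the maxSkew and whenUnsatisfiable values are placeholder assumptions.

```python
import json

# Hypothetical app label; replace with your workload's own labels.
APP_LABELS = {"app": "web"}

# Standard Kubernetes topology spread constraint keyed on the Spot lifecycle label.
spread_constraint = {
    "maxSkew": 1,  # allow at most one replica of imbalance between lifecycles
    "topologyKey": "spotinst.io/node-lifecycle",
    "whenUnsatisfiable": "DoNotSchedule",
    "labelSelector": {"matchLabels": APP_LABELS},
}

deployment = {
    "apiVersion": "apps/v1",
    "kind": "Deployment",
    "metadata": {"name": "web"},
    "spec": {
        "replicas": 4,
        "selector": {"matchLabels": APP_LABELS},
        "template": {
            "metadata": {"labels": APP_LABELS},
            "spec": {
                "topologySpreadConstraints": [spread_constraint],
                "containers": [{"name": "web", "image": "nginx"}],
            },
        },
    },
}

print(json.dumps(deployment, indent=2))
```

With four replicas and a maxSkew of 1, the scheduler would aim for two replicas on each lifecycle.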

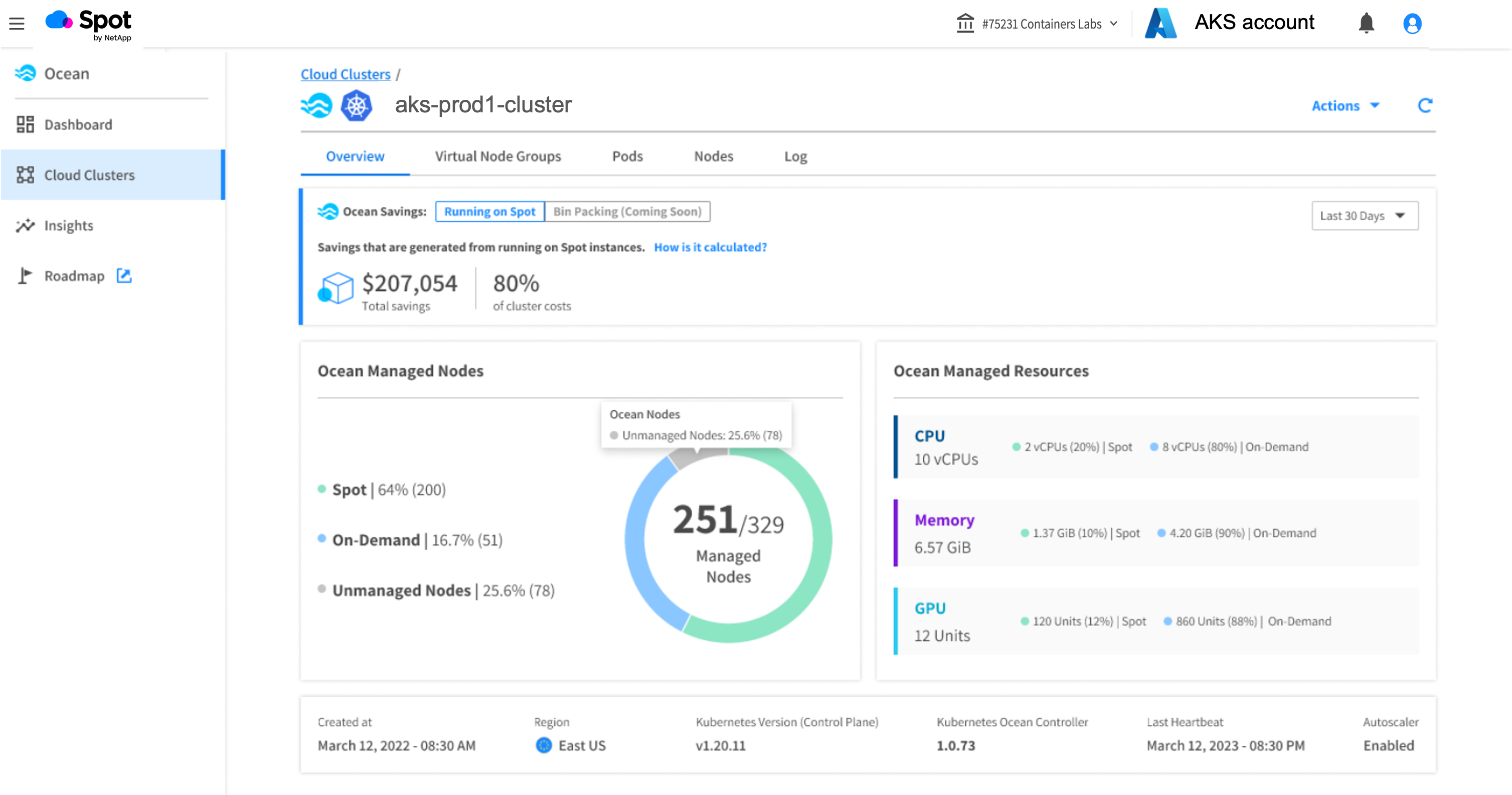

With Spot Savings, you can view and track the savings from running on Spot VMs in your Ocean AKS cluster. This is available in the Overview tab of the Ocean console.

Way more than a Kubernetes auto-scaler

Ocean AKS is easy to deploy, and you can get it running in no time. But if you want to customize your infrastructure or fine-tune the scaling for a specific workload, Ocean is feature-packed with lots of customization tools.

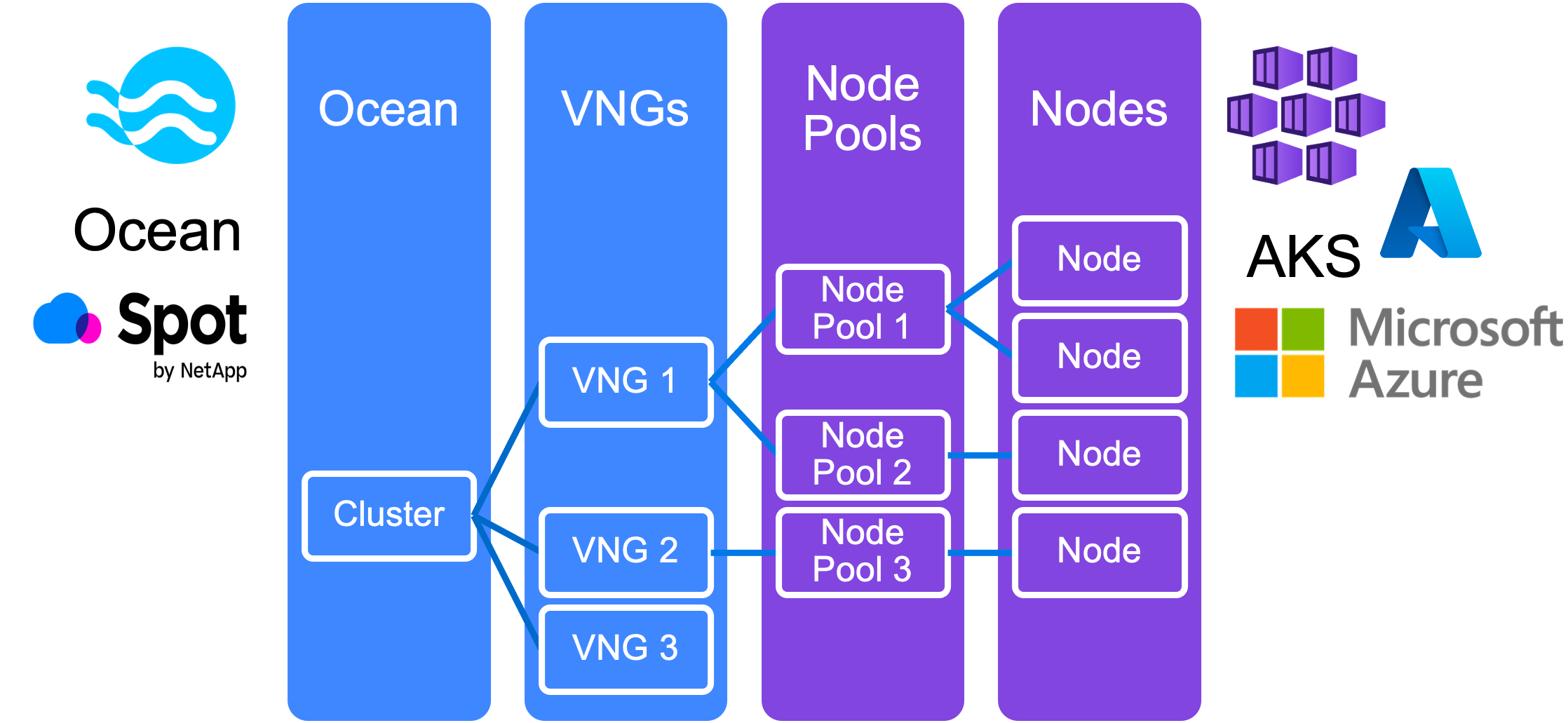

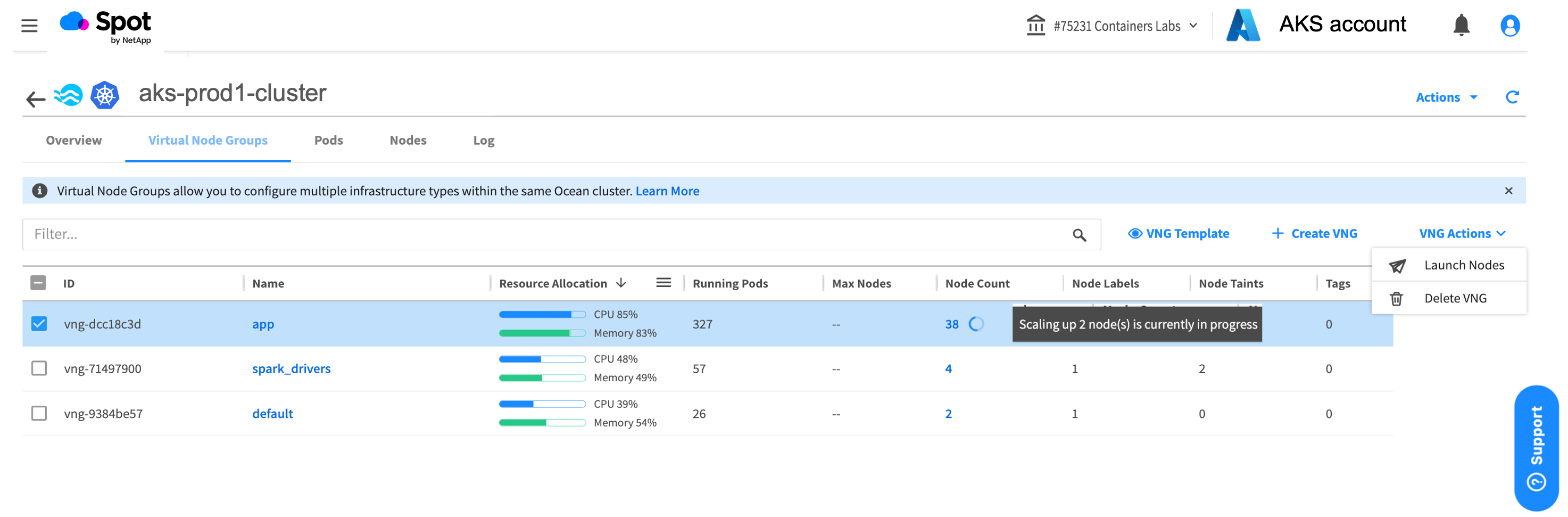

VNGs (Virtual Node Groups) – A VNG is a grouping of nodes with common configuration and scaling parameters. VNGs allow you to build custom scaling profiles to filter and provision nodes. A VNG defines the guardrails within which the Ocean AKS engine selects and provisions the right nodes for your workloads. A VNG can have multiple node pools, spreading workloads across different VM SKUs and VM sizes.

In creating a VNG for workloads that need specific hardware or custom scaling, consider these examples:

- AI/ML workloads that require Nvidia GPU nodes with special labels and taints, so that other workloads cannot be deployed on these nodes

- Low-latency Apache Spark Big Data workloads that need local ephemeral disks and additional headroom (spare capacity) to scale up faster when there is a usage spike

- High-performance computing (HPC) workloads that require 3rd Gen Intel Xeon (Ice Lake) or AMD EPYC x86-64 processors

- Edge computing that requires lightweight ARM64 processors with limited CPUs (2-16 vCPUs)

- Apache Spark workloads whose drivers cannot be interrupted and must always run on regular (on-demand) nodes, while workers can run on Spot VMs
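The GPU example in the list above relies on the standard Kubernetes taint and toleration mechanism. Here is a simplified sketch of that matching rule, assuming a hypothetical taint key `gpu`; it mirrors the documented Kubernetes semantics in outline only and is not Ocean's or the scheduler's implementation.

```python
BLOCKING_EFFECTS = ("NoSchedule", "NoExecute")


def tolerates(toleration, taint):
    """Return True if a single toleration matches a single taint."""
    # An empty or missing effect on the toleration matches every effect.
    if toleration.get("effect") and toleration["effect"] != taint["effect"]:
        return False
    if toleration.get("operator", "Equal") == "Exists":
        # "Exists" with no key tolerates every taint.
        return not toleration.get("key") or toleration["key"] == taint["key"]
    return (
        toleration.get("key") == taint["key"]
        and toleration.get("value", "") == taint.get("value", "")
    )


def can_schedule(pod_tolerations, node_taints):
    """A pod fits only if every blocking taint on the node is tolerated."""
    blocking = [t for t in node_taints if t["effect"] in BLOCKING_EFFECTS]
    return all(any(tolerates(tol, taint) for tol in pod_tolerations) for taint in blocking)


# Hypothetical taint on GPU nodes and the matching toleration on an AI/ML pod.
gpu_taint = {"key": "gpu", "value": "true", "effect": "NoSchedule"}
ml_toleration = {"key": "gpu", "operator": "Equal", "value": "true", "effect": "NoSchedule"}

print(can_schedule([], [gpu_taint]))               # ordinary pod is kept off GPU nodes
print(can_schedule([ml_toleration], [gpu_taint]))  # AI/ML pod is allowed
```

A toleration only permits scheduling onto the tainted nodes; steering the AI/ML pods there still needs a node selector or affinity on the node labels.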

Automatic and manual headroom – Pre-allocate capacity for fast scale-up based on historical workload trend data, and/or manually specify resource headroom as a number of vCPUs, an amount of memory, and a GPU count for the cluster or a workload VNG.
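To see why headroom speeds up scaling, consider this minimal sketch: if the pending pods fit within the spare capacity, they can start immediately instead of waiting for new nodes. The `Resources` type and `fits_in_headroom` function are hypothetical names for illustration, and the check ignores how capacity is fragmented across individual nodes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Resources:
    vcpus: int
    memory_gib: int
    gpus: int = 0


def fits_in_headroom(pending_pods, headroom):
    """Return True if the summed pod requests fit in the spare capacity."""
    return (
        sum(p.vcpus for p in pending_pods) <= headroom.vcpus
        and sum(p.memory_gib for p in pending_pods) <= headroom.memory_gib
        and sum(p.gpus for p in pending_pods) <= headroom.gpus
    )


# Hypothetical manual headroom: 8 vCPUs, 32 GiB of memory, 1 GPU.
headroom = Resources(vcpus=8, memory_gib=32, gpus=1)
burst = [Resources(2, 4), Resources(4, 16, 1)]

print(fits_in_headroom(burst, headroom))                      # absorbed by headroom
print(fits_in_headroom(burst + [Resources(4, 8)], headroom))  # needs new nodes
```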

Shutdown hours – Schedule all the nodes in the cluster to shut down at night or on weekends when not being used to save costs.
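As an illustration of the schedule logic, the sketch below decides whether a given moment falls inside a shutdown window. The window itself (weeknights from 20:00 to 06:00, plus all of Saturday and Sunday) and the function name are assumptions; Ocean's actual configuration format is not shown in this article.

```python
from datetime import datetime, time


def in_shutdown_hours(moment, night_start=time(20, 0), night_end=time(6, 0)):
    """Return True if `moment` is on a weekend or inside the nightly window."""
    if moment.weekday() >= 5:  # Monday is 0, so 5 and 6 are Saturday and Sunday
        return True
    current = moment.time()
    # The window wraps past midnight, so it is the union of two ranges.
    return current >= night_start or current < night_end


print(in_shutdown_hours(datetime(2024, 1, 3, 12, 0)))  # Wednesday at noon
print(in_shutdown_hours(datetime(2024, 1, 3, 22, 0)))  # Wednesday at night
print(in_shutdown_hours(datetime(2024, 1, 6, 12, 0)))  # Saturday at noon
```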

Restrict scale down – Use the restrict-scale-down Spot label (spotinst.io/restrict-scale-down: "true") as a pod-level label to mark a non-resilient pod that should not be interrupted (drained) and should be allowed to complete, even when its node has been selected for scale-down during the regular bin-packing cycle.

Auto-healing – When a node becomes unhealthy (not responding to health checks), Ocean safely drains it, gracefully evicts its pods, and launches replacement node(s).
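A pod carrying this label could be declared as in the sketch below, again built as a Python dictionary and printed as JSON. The label key and value come from the text above; the pod name, image, command, and the helper function are hypothetical.

```python
import json

RESTRICT_LABEL = "spotinst.io/restrict-scale-down"

# Hypothetical long-running batch pod that should not be drained mid-run.
pod = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {
        "name": "batch-job",
        "labels": {RESTRICT_LABEL: "true"},  # label values are strings
    },
    "spec": {
        "containers": [
            {"name": "worker", "image": "busybox", "command": ["sleep", "3600"]}
        ]
    },
}


def is_scale_down_restricted(pod_manifest):
    """Return True if the manifest carries the restrict-scale-down label."""
    labels = pod_manifest.get("metadata", {}).get("labels", {})
    return labels.get(RESTRICT_LABEL) == "true"


print(json.dumps(pod, indent=2))
print(is_scale_down_restricted(pod))
```

For pods created by a Deployment or Job, the label would go on the pod template's metadata so that every replica inherits it.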

Everything AKS: Ocean built on AKS building-blocks (node pools)

Ocean AKS supports all standard AKS tools, APIs, and the CLI to query, update, and manage your AKS cluster. Use the Azure portal to monitor, debug, and update your AKS cluster. Continue to use Microsoft support for any AKS issues unrelated to autoscaling.

Ocean AKS has inherent support for all AKS storage options (Azure Disk CSI, Azure Files CSI, Azure NetApp Files, Azure Blob) and AKS network options (kubenet, Azure CNI, Cilium, Calico).

Leverage AKS Day-2 operations to upgrade your AKS cluster (Kubernetes version upgrade), patch AKS worker nodes, or auto-update node images. Use your existing automation and third-party tools.

Ocean AKS detects when an AKS upgrade is in progress and locks the node pools being upgraded, so the Ocean autoscaler can continue to scale the cluster without affecting them. You can view and debug node pools from the Ocean AKS Node Pools tab.

Test drive the Ocean AKS engine

Don’t get burned out managing your AKS infrastructure yourself and wondering what your next Azure cloud bill will look like. Roll out your AKS application services and save costs on AKS infrastructure with managed Ocean from Spot, the market leader in cloud optimization solutions.

Request a demo with a solution architect.